Introduction

Simply put, virtualization is an idea whose time has come. The term virtualization broadly describes the separation of a resource or request for a service from the underlying physical delivery of that service. With virtual memory, for example, computer software gains access to more memory than is physically installed, via the background swapping of data to disk storage. Similarly, virtualization techniques can be applied to other IT infrastructure layers – including networks, storage, laptop or server hardware, operating systems, and applications.

What is Virtualization?

According to Wikipedia, virtualization refers to the act of creating a virtual (rather than actual) version of something, including virtual computer hardware platforms, storage devices, and computer network resources.

According to Red Hat, virtualization is a technology that lets you create useful IT services using resources that are traditionally bound to hardware. It allows you to use a physical machine’s full capacity by distributing its capabilities among many users or environments.

According to VMware, virtualization is the process of creating a software-based, or virtual, representation of something, such as virtual applications, servers, storage, and networks. It is the single most effective way to reduce IT expenses while boosting efficiency and agility for businesses of all sizes.

In brief, virtualization is the art and science of simulating or emulating the function of an object or resource in software, so that it behaves identically to the corresponding physical object.

In other words, virtualization makes software look and behave like hardware, with corresponding benefits in flexibility, cost, scalability, reliability, and often overall capability and performance, across a broad range of applications.

Virtualization, then, makes “real” that which is not, applying the flexibility and convenience of software-based capabilities and services as a transparent substitute for the same realized in hardware.

Example of Virtualization:

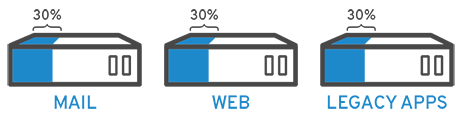

Here is a good example from Red Hat. Imagine you have 3 physical servers, each with its own dedicated purpose. One is a mail server, another is a web server, and the last one runs internal legacy applications.

Each server runs at about 30% capacity, just a fraction of its potential. But since the legacy apps remain important to your internal operations, you have to keep them and the third server that hosts them, right?

Traditionally, yes. It was easier and more reliable to run individual tasks on individual servers:

1 server, 1 operating system, 1 task.

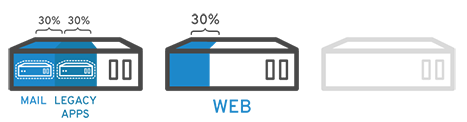

It wasn’t easy to give 1 server multiple brains. But with virtualization, you can split the mail server into 2 unique ones that handle independent tasks, so the legacy apps can be migrated onto it. It’s the same hardware; you’re just using more of it, more efficiently.

Keeping security in mind, you could split the first server again so it could handle another task, increasing its use from 30% to 60% and then to 90%. Once you do that, the now-empty servers could be reused for other tasks or retired altogether to reduce cooling and maintenance costs.
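The consolidation arithmetic in this example can be sketched in a few lines of Python. This is only an illustration under simplifying assumptions: the three workloads and the 30% figures come from the example, while the `consolidate` function, the 100% capacity default, and the idea that loads simply add up on a shared host are hypothetical choices made for the sketch. Real hypervisors schedule resources in far more detail.

```python
# Hypothetical model of the three-server example.
# Each load is a percentage of one physical host's capacity.
workloads = {"mail": 30, "web": 30, "legacy": 30}


def consolidate(loads, capacity=100):
    """Place workloads on as few hosts as possible using first-fit.

    Assumes loads add up linearly on a shared host (a simplification).
    """
    hosts = []  # workload names placed on each host
    used = []   # percent of capacity used on each host
    for name, load in loads.items():
        for i in range(len(hosts)):
            if used[i] + load <= capacity:
                hosts[i].append(name)
                used[i] += load
                break
        else:
            # No existing host has room, so bring up another one.
            hosts.append([name])
            used.append(load)
    return hosts, used


hosts, used = consolidate(workloads)
print(hosts)  # [['mail', 'web', 'legacy']]
print(used)   # [90]
```

Under these assumptions all three workloads fit on one host at 90% use, which leaves the other two servers free to be reused or retired. Lowering `capacity` (for example to leave headroom for peaks) spreads the workloads over more hosts.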

What is the importance of “Virtualization”?

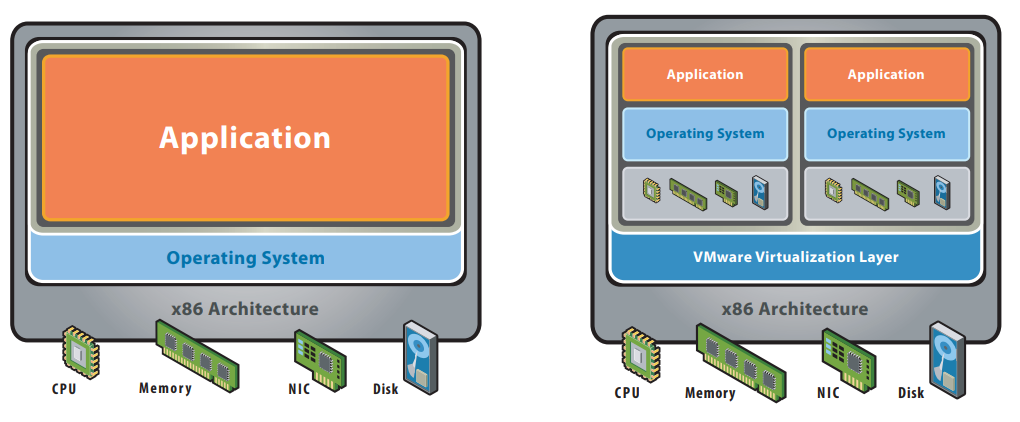

We’ll discuss the benefits of virtualization by comparing an environment before and after virtualization, as summarized in the figure above:

Before Virtualization:

- Single OS image per machine.

- Software and hardware tightly coupled.

- Running multiple applications on the same machine often creates conflicts.

- Underutilized resources.

- Inflexible and costly infrastructure.

After Virtualization:

- Hardware-independence of operating system and applications.

- Virtual machines can be provisioned to any system.

- The OS and application can be managed as a single unit by encapsulating them in a virtual machine.
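To make the "After Virtualization" points a little more concrete, here is a minimal sketch of encapsulation and hardware independence. Everything in it is hypothetical: the `VirtualMachine` and `Host` classes, the `migrate` function, and the server names are invented for illustration and do not correspond to any real hypervisor API.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class VirtualMachine:
    """Hypothetical bundle of an OS and an application, managed as one unit."""
    name: str
    os: str
    application: str


@dataclass
class Host:
    """Hypothetical physical server; in this model any host can run any VM."""
    name: str
    vms: list = field(default_factory=list)

    def provision(self, vm):
        self.vms.append(vm)


def migrate(vm, source, target):
    """Move the whole VM (OS plus application) from one host to another."""
    source.vms.remove(vm)
    target.provision(vm)


legacy = VirtualMachine("legacy-vm", os="Example OS", application="internal legacy apps")
old_server = Host("server-3", vms=[legacy])
mail_server = Host("server-1")

migrate(legacy, old_server, mail_server)
print(old_server.vms)            # []
print(mail_server.vms[0].name)   # legacy-vm
```

The point of the sketch is that the OS and application travel together as one object, and nothing in `migrate` depends on which physical host is the source or the target.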

If anything about virtualization is still unclear, please write your question in the comment section and I’d be happy to answer. Stay tuned for the next article, on the “Hypervisor”.

Resources:

- Wikipedia.

- VMware.

- Red Hat.